How to Use AI for Research: Fact-Checking, Summaries & Smart Outlines That Actually Hold Up

A practical, no-fluff guide for PhD students, analysts, academics, and knowledge workers who want to use AI without embarrassing themselves — or their institutions.

Thank you for reading this post, don't forget to subscribe!

Table of Contents

- The Story Nobody Talks About

- What Does It Actually Mean to Use AI for Research?

- 7 Research Mistakes People Make With AI

- How to Use AI for Research: The Step-by-Step Workflow

- The AI Research Workflow Diagram

- AI Tool Comparison Table

- How to Use AI for Research in Systematic Reviews

- How to Use AI for Research Without Violating Academic Integrity

- Ethical Risks, Bias in Training Data, and What Integrity Actually Demands

- How Researchers in Non-English-Speaking Countries Can Use AI to Close the Access Gap

- Where AI-Powered Research Is Actually Heading

- Frequently Asked Questions

- Conclusion

The Story Nobody Talks About

Six citations. Zero real cases. A courtroom full of silence.

In 2023, a New York law firm submitted a court brief citing six legal precedents. The citations looked impeccable — real-sounding case names, plausible courts, plausible years, formatted correctly. The opposing counsel flagged something odd. The judge looked them up.

Every single citation was fabricated. A lawyer had used ChatGPT to help build the brief and never verified a single output. The firm faced sanctions. The story made international news.

And then it kept happening.

To researchers. To journalists. To PhD students submitting dissertations. To analysts at firms whose clients trusted their work. The settings changed. The mistake stayed identical.

That is the world we are in. Knowing how to use AI for research is now one of the most consequential professional skills of this decade — and most people are doing it wrong. Not because the tools are bad. Because they are using powerful tools without understanding where those tools break down.

This guide fixes that. Practically. Specifically. Without the usual enthusiasm that quietly skips over the parts that get people into trouble.

We cover the AI-assisted research workflow from start to finish: how to fact-check with AI without being misled by it, how to use AI to summarize research papers without losing the nuance that makes a summary honest, how to build outlines that actually reflect the literature, and how to verify citations so you never find yourself in a courtroom — or a thesis defense — defending something that does not exist.

We also cover what most guides skip: the systematic bias in AI training data, real hallucination rates across current tools, how researchers in non-English-speaking countries can use AI to access global scholarship, and what responsible AI use in academia actually demands in 2026.

Stanford HAI’s ongoing research into AI in knowledge work has consistently documented that even high-performing models introduce factual slippage when synthesizing across multiple documents. This is not a fringe concern. It is the norm. And it is exactly why this guide exists.

What Does It Actually Mean to Use AI in Academic Research?

People use the phrase constantly. But it covers a huge range of things, and the range matters enormously.

Asking ChatGPT a question is not AI-assisted research. Pasting an abstract and asking for a summary is not either. Those are uses of AI. They are not research workflows.

Real AI-assisted research is a structured process where AI tools handle specific, bounded tasks within a workflow that a human expert controls from start to finish. The AI accelerates. The researcher directs, evaluates, and validates.

What AI genuinely does well in a research context:

- Processing large volumes of text at a speed no human can match at scale

- Identifying recurring themes or contradictions across multiple documents

- Rewriting dense academic prose into plain language without losing the core meaning

- Helping non-native English speakers engage with global scholarship in other languages

- Generating structured outlines and literature maps to orient a new research project

- Flagging where an argument has logical gaps or missing counterarguments

What AI cannot do reliably — regardless of which model you use:

- Verify that a cited paper actually exists in any real academic database

- Guarantee that a reproduced statistic is accurate and contextually correct

- Know about research published after its training cutoff date

- Understand the political or cultural nuance of research it was not trained on

- Tell you clearly when it is confidently, completely wrong

That last point is the dangerous one. When a model hallucinates, it does not pause and flag uncertainty. It continues with exactly the same confident tone it uses when it is completely right. There is no signal. The text just flows. And it looks exactly like the truth.

The mindset shift you need from the start: AI-assisted research means using AI to move faster, not to move without checking.

7 Research Mistakes People Make With AI (And How to Avoid Every Single One)

Before the workflow, let’s clear the field. These are the mistakes that damage careers.

Mistake 1 — Trusting AI citations blindly. AI models fabricate citations regularly. They look real. They are not. Verify every single one in an original academic database before it touches your draft. No exceptions, ever.

Mistake 2 — Summarizing a paper by title only. Asking AI to summarize a paper without providing the actual text produces confident, plausible fabrication. Always paste the content you want analyzed. A title is not enough.

Mistake 3 — Ignoring the training cutoff. Most models are 12 to 18 months behind the current date. Any paper, dataset, or development published after that cutoff is invisible to the model — and it will not always tell you this. Check the recency of whatever you need, independently.

Mistake 4 — Skipping the methodology check. AI summaries make findings sound cleaner and more definitive than the authors actually claimed. For anything you plan to cite directly, read the methodology section yourself. No shortcut replaces this.

Mistake 5 — Using AI before forming your own hypothesis. If AI shapes your research question before you have engaged with the field yourself, you end up with a question framed by the model’s training biases — not by your expertise. Form your own angle first. Use AI to stress-test it.

Mistake 6 — Over-automating the literature review. AI can help you map and screen literature at scale. It cannot assess study quality, resolve methodological ambiguity, or catch the significance of a subtle inconsistency across papers. Over-automation means missing what matters most.

Mistake 7 — Not disclosing AI use. As of 2026, most major journals require explicit disclosure of AI tool use in submitted work. Many institutions go further. Not disclosing is an integrity issue, not a technicality. Know your institution’s current rules and follow them.

How to Use AI for Research: The Step-by-Step Workflow

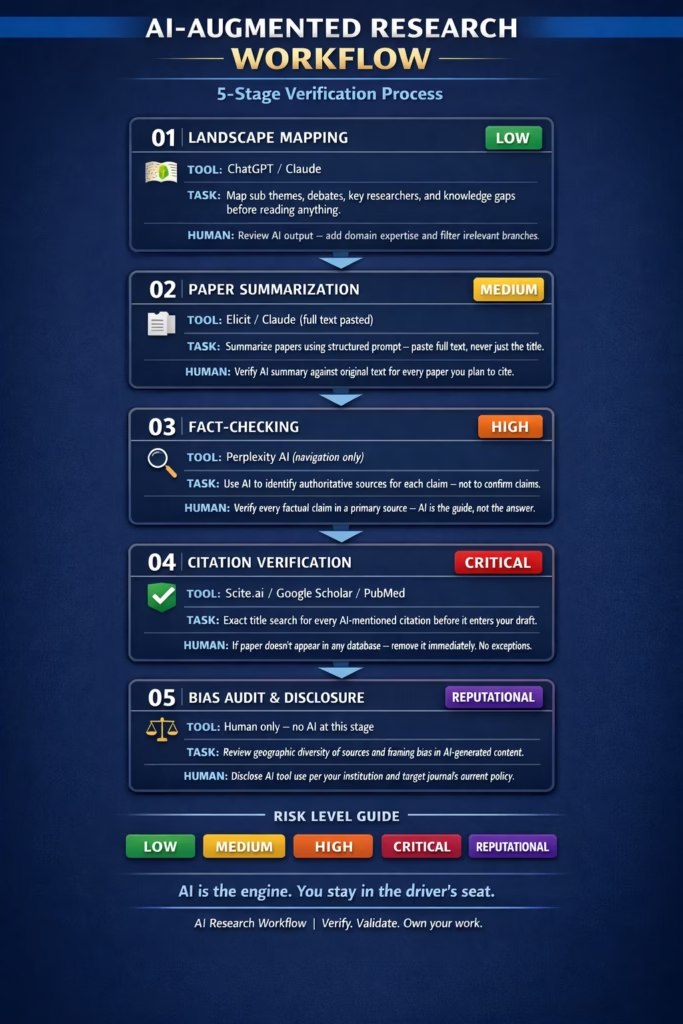

Each stage of the research process has a different risk profile. Here is how to handle each one with the right tools and the right level of caution.

Stage One: Fact-Checking with AI — Use It as a Direction-Finder, Not a Source

Here is a mistake that costs researchers their credibility: asking AI whether something is true.

“Is it true that global plastic recycling rates hover around 9%?” The model confirms this confidently. But where did that number come from? A blend of sources, compressed into training weights, with no audit trail you can follow. It might be accurate. It might also be slightly off, or right about the number but wrong about the specific baseline year or methodology used to calculate it.

The correct approach is to use AI as a direction-finder — not as the fact itself.

Picture this scenario. You are writing a public health paper on child malnutrition rates in Sub-Saharan Africa. You find a statistic: 45% of child deaths in the region are linked to undernutrition. You paste it to ChatGPT and ask whether it is accurate. The model says yes, confidently, with context that sounds authoritative. But what you actually needed was: which organization published this figure, in which year, using which methodology, and whether more recent data has revised it. Those questions are fundamentally different from “is this true” — and AI handles them very differently.

The prompt that changes everything:

"I've come across this claim: [PASTE CLAIM]. Rather than confirming whether it is accurate, I want you to: 1. Tell me which field or domain this falls under 2. Name the most authoritative sources or institutions that publish data on this topic 3. Suggest 3 specific search terms I should use in Google Scholar, PubMed, or WHO databases to verify this 4. Flag any part of this claim where your confidence is low or where definitions might vary significantly."

That reframes the AI from fact-checker to research navigator. It is a much more honest relationship with the tool — and a dramatically safer one.

Then take those suggested paths and go to the primary sources yourself. WHO reports. UN datasets. Peer-reviewed journals. Government statistical offices. Read the original. Do not trust AI’s description of what a source says; read the source itself.

Tools like Perplexity AI make initial navigation somewhat easier because they include clickable citation links alongside responses. Even then — click through every time. Do not assume the quote is accurate or in context.

Stage Two: Using AI to Summarize Research Papers Without Losing the Truth

AI is very good at summaries. Almost too good. That is part of the problem.

Research papers are deliberately uncertain. They hedge claims, acknowledge limitations, and contain contradictions that survive in the original but disappear in compression. When a model summarizes a paper, it tends to make findings sound cleaner, more conclusive, and more universal than the authors actually intended.

A 2023 study published in PLOS ONE examining AI-generated biomedical summaries found that over 60% contained at least one meaningful inaccuracy — not outright fabrication, but distortions of emphasis, scope, or certainty. The model was not lying. It was averaging. And averaging research findings is itself a form of distortion. Nature has raised similar concerns about multi-paper AI synthesis, noting that small misrepresentations at the individual summary level compound across sources until the final output is technically sentence-level accurate but contextually misleading as a whole.

Here is what that looks like in practice. Imagine you are researching the effectiveness of community health workers in rural Kenya. You ask AI to summarize three relevant papers. The first found strong effects in one district. The second found mixed results in a different region with different infrastructure. The third raised methodological concerns about outcome measurement. AI synthesizes all three into: “Community health workers have shown strong effectiveness in rural Kenya.” Technically supported by paper one. Misleading as a description of what the three papers actually show together.

The fix: always feed the model the actual text, not just the title.

Asking AI to summarize a paper it has not read produces plausible fabrication. Paste the full text every single time.

Use a structured summary prompt:

"I am going to paste the full text of a research paper. Please summarize it using this exact structure: 1. Core research question (one sentence) 2. Study design and methodology (3-4 sentences maximum) 3. Key findings — bullet points, quoting specific numbers exactly as they appear in the paper 4. Limitations explicitly acknowledged by the authors 5. What the authors say their findings do NOT prove 6. One paragraph on how this connects to: [YOUR SPECIFIC TOPIC] Do not add information that is not in the paper. If you are uncertain about any section, say so explicitly. Here is the paper: [PASTE FULL TEXT]"

Point five is the most important one people skip. Asking the model to articulate what the paper does not prove forces it to represent the authors’ caveats honestly rather than defaulting to the most confident reading of the findings.

The bias problem that deserves more attention.

Most large language models are trained predominantly on English-language text, with heavy overrepresentation of North American and European academic sources. Research from South Asia, sub-Saharan Africa, Southeast Asia, and Latin America is systematically underrepresented in training corpora.

In practice, when AI summarizes “the literature” on a topic, it may be drawing on a geographically narrow slice of global scholarship — without flagging this. For a researcher in Nigeria, India, or Brazil, the AI’s framing of debates may not reflect the most relevant evidence for their context. For anyone studying global or cross-cultural topics, this framing bias is a meaningful methodological concern, not a footnote.

The response is not to stop using AI summaries. It is to supplement them with targeted searches in regional databases, and to read the original source for anything you plan to cite directly.

Stage Three: Building Research Outlines That Are Actually Useful

This is where AI adds the most reliable value with the lowest hallucination risk. Outlines are generative, not factual. The model is helping you structure thinking and map terrain — tasks where its broad training is an asset rather than a liability.

Start broad. Get the landscape.

"I am starting a research project on [TOPIC]. Before I read anything, I want to understand the landscape. Please give me: - The 5-6 major sub-themes within this field - 3 ongoing debates or unresolved questions scholars are actively working through - Names of 4-5 researchers or groups considered influential right now - 2-3 areas that appear under-researched or genuinely contested Be specific. Avoid generic summaries."

Review what the AI produces. Add your own instincts. Remove anything that does not fit your angle. This output is not your research — it is your orientation before you begin reading.

Then get a working structure.

"Given this research question: [YOUR SPECIFIC QUESTION], help me draft a detailed outline for a literature review. Each section should include: - A clear focus for that section - 2-3 questions that section needs to answer - A note on the type of sources most relevant (systematic reviews, empirical studies, meta-analyses, etc.) Format this as a working document, not a finished structure."

The phrase “working document, not a finished structure” matters more than it looks. It signals that you want exploratory scaffolding, not a polished plan. The outputs are consistently more honest and more useful as a result.

After two weeks of actual reading, come back. Paste your notes. Ask: “Here is what I have covered so far. What angles or counterarguments am I missing?” This iterative use — making AI identify your own blind spots — is where the AI workflow for research writing truly earns its place.

Stage Four: Source Verification and AI Citation Checking — The Part That Can End Careers

We need to be completely direct about this.

AI fabricates citations. Not occasionally. Regularly. And they look convincing.

A hallucinated citation will have a plausible author name, a real-sounding journal, a reasonable title, and a year that fits the context. It will not exist in any database. And if you submit it — in a thesis, a paper, a grant application, a report — you are responsible for that error. Not the AI. You.

The documented cases extend far beyond law. Academic papers have been retracted. Journalists have issued formal corrections. Researchers have had to post public retractions on institutional websites. All because they used AI for citations and never verified the output.

The verification workflow that actually holds up:

- Every citation the AI mentions goes into Google Scholar, PubMed, or Semantic Scholar as an exact title search — every single one, no exceptions

- If the paper appears, verify that the journal, year, and authors exactly match what the AI said

- Click through to the abstract and confirm the AI’s description of the findings accurately reflects what the paper actually claims

- If the paper does not appear after multiple search variations, treat it as fabricated and remove it immediately

For systematic AI citation verification at scale:

Scite.ai is the most sophisticated purpose-built tool currently available. It does not just confirm a paper exists — it shows you how subsequent research has engaged with it. Supporting citations, contrasting citations, mentioning citations. A paper cited 200 times with 40% contrasting citations tells a fundamentally different story than citation count alone suggests.

Elicit is built specifically for the AI literature review process. Submit a research question and receive structured summaries grounded in actual indexed papers. It has coverage gaps — particularly for recent preprints and non-English sources — but it is designed around research integrity in a way that general-purpose models are not.

Stage Five: Understanding and Reducing AI Hallucinations in Research

Hallucination is not a bug waiting to be patched. It is a fundamental characteristic of how these models generate text.

Large language models predict the most plausible next token given everything that came before. When they do not have reliable training data for something, they do not stop. They generate the most plausible continuation. Which can sound, very often, exactly like the truth.

Hallucination rates vary across tools and are heavily context-dependent. A 2024 benchmarking analysis examining GPT-4, Claude, and Gemini across structured research tasks — conducted by researchers at the AI2 Institute — found that models performed significantly better when given source text to work from compared to open recall tasks. The gap was largest on niche, technical, or recent topics: precisely where researchers most need accuracy.

How to build hallucination resistance into every research task:

- Feed the model text, not titles. Always paste what you want analyzed

- Ask explicitly for uncertainty flags in every prompt: “If you are not confident in any part of this, say so and explain why”

- Use RAG-enabled tools where available — they retrieve actual documents before generating responses, dramatically reducing fabrication rates

- Never carry specific numbers, dates, or proper nouns from AI output into your draft without independent verification

- Treat citations, statistics, and named researchers with particular suspicion — these are the most common fabrication zones

A non-negotiable pre-submission checklist:

- [ ] Every citation verified in an original academic database?

- [ ] Every statistic traced to a primary published source?

- [ ] AI summaries compared against original paper text for key claims?

- [ ] Geographic and cultural bias in AI’s framing of the topic considered?

- [ ] AI use disclosed in accordance with institutional and journal policy?

If any of those are unchecked — the work is not ready.

The AI Research Workflow: 5-Stage Visual Guide

The same workflow as pseudocode:

AI_RESEARCH_WORKFLOW(topic, research_question): // Stage 1 — Landscape mapping terrain = AI.prompt("Major themes, debates, gaps in: " + topic) outline = AI.prompt("Lit review structure for: " + research_question) // Stage 2 — Paper summarization FOR each section IN outline: papers = Scholar.search(section.focus_keywords) FOR each paper IN papers: text = PDF.extract(paper) summary= AI.summarize(text, structured_prompt) human.verify(summary, text) // non-negotiable // Stage 3 — Fact-checking FOR each claim IN draft: paths = AI.suggest_verification_sources(claim) confirmed= human.check_primary_sources(paths) IF NOT confirmed: flag_claim(claim) // Stage 4 — Citation validation FOR each citation IN draft: exists = Scite.lookup(citation) OR Scholar.exact_title(citation) IF NOT exists: REMOVE(citation) + LOG("fabricated") // Stage 5 — Bias + disclosure audit human.review(geographic_diversity, source_language_mix) human.complete_disclosure_requirements() RETURN verified_draft

AI Tool Comparison: Which One Is Right for Your Research Task?

No single tool dominates everything. Here is an honest breakdown of where each earns its place — and where each falls short.

| Tool | Best Used For | Real Strength | Real Limitation |

|---|---|---|---|

| ChatGPT (GPT-4o) | Brainstorming, outlining, drafting | Large context window, versatile, widely integrated | Confident hallucinator — AI citation checking must always be externally verified |

| Claude (Anthropic) | Long document analysis, nuanced interpretation | Handles very long texts; more likely to flag its own uncertainty | No live web access in base version; still hallucinates on niche topics |

| Perplexity AI | Rapid fact-checking with live sources | Real-time web access; inline clickable citations | Weaker at deep synthesis; surface-level on complex academic topics |

| Scite.ai | Citation verification and evidence weight | Shows supporting vs. contrasting citations; peer-review aware | Subscription required for full access; some field coverage gaps |

| Elicit | Systematic literature reviews, evidence mapping | Purpose-built for research; grounded in indexed papers | Coverage gaps for recent preprints and non-English-language sources |

How to Use AI for Research in Systematic Reviews

Systematic reviews are the most rigorous form of evidence synthesis in academia. They demand exhaustive database searches, strict eligibility screening, data extraction, quality assessment, and bias analysis across every included study. A single well-conducted review can take a research team two to three years.

AI is beginning to make genuine inroads — but in specific, bounded parts of the process.

Where AI currently helps in systematic reviews:

Abstract screening at scale is the clearest win. AI tools can process thousands of abstracts against eligibility criteria and produce a shortlist for human review. What once took months can now take days. For resource-limited research teams running reviews on tight timelines, this is a real and meaningful gain.

Data extraction from standardized reporting formats is another area of emerging reliability. When studies follow consistent structures — as in clinical trial reporting — AI can extract key variables with reasonable accuracy, reducing manual workload significantly.

Where AI still breaks down:

Assessing study quality requires expert judgment about methodological adequacy, risk of bias, and generalizability — none of which current models evaluate reliably. Handling ambiguous eligibility criteria produces inconsistent and sometimes contradictory AI outputs. Resolving heterogeneity across study designs requires domain expertise that cannot be compressed into a prompt.

The Cochrane Collaboration — the global standard-setter for systematic review methodology — has been actively developing formal guidelines for responsible AI integration in systematic reviews. These guidelines are specifically addressing where AI assistance is permissible, what oversight is required at each decision point, and how AI-assisted screening should be documented. Until those standards are finalized and validated at scale, human oversight at every key decision point remains non-negotiable.

A practical entry point: Use AI for a second-pass abstract screening after your own first pass — not as a replacement for it. Compare where AI and human screener decisions diverge. That divergence is itself useful data about where your eligibility criteria may be ambiguous or underspecified.

How to Use AI for Research Without Violating Academic Integrity

Academic integrity in the age of AI is not a simple checklist. It is a set of principles that require ongoing judgment — because the technology is moving faster than the policy frameworks designed to govern it.

Here is what responsible AI use in academic research actually looks like across five non-negotiable principles.

Principle 1 — Verify everything you cannot independently defend.

If a citation, statistic, or factual claim in your submitted work came from AI output and you have not personally verified it in a primary source, it should not be there. You are responsible for every claim in your work. AI cannot share that accountability.

Principle 2 — Disclose AI use specifically and accurately.

“AI tools were used in this research” is not adequate disclosure in most institutional and journal contexts in 2026. Be specific: which tools, for which tasks, at which stages. This is the only honest position — and it is increasingly the required one.

Principle 3 — Do not let AI generate your intellectual contribution.

Using AI to organize literature, translate sources, or draft structural scaffolding is one thing. Using AI to generate your research question, your analysis, your conclusions, or the core of your argument is a different matter entirely. It substitutes pattern-matching for expertise. The value of research lies in what you bring that AI cannot.

Principle 4 — Know where the fabrication risk is highest.

Citations. Specific statistics. Proper nouns. Recent developments. Niche or specialized topics. These are where hallucination is most common and where the consequences of error are most serious. Apply your most rigorous verification precisely at these points.

Principle 5 — Check your institution’s current policy — not last year’s version.

AI policies in academia are changing faster than institutional handbooks are being updated. What was ambiguous six months ago may be explicitly prohibited or explicitly permitted today. Check the current version of your institution’s policy and your target journal’s author guidelines before every submission.

| Risk Type | When It Happens Most | How to Prevent It |

|---|---|---|

| Fabricated citations | AI asked to recall or generate references from training memory | Verify every citation with exact title search in Scholar/PubMed |

| Distorted summaries | AI summarizes without being given the actual paper text | Always paste full text; read original for anything cited directly |

| Training bias in framing | AI defaults to Western/English academic perspectives as baseline | Supplement with regional databases; read originals for context-specific topics |

| Outdated information | AI training cutoff is 12–18 months behind the current date | Check publication dates; search current databases for recent work |

| Undisclosed AI use | Researcher omits AI tools from methods section | Document every tool used; disclose specifically per submission requirements |

Ethical Risks, Bias in Training Data, and What Integrity Actually Demands

The Fabricated Citation Problem Is Bigger Than the Headlines Suggest

Most conversations about AI ethics in research focus on plagiarism. That is a real concern. But fabricated citations are, right now, a more immediate and more consequential problem — and they are far more common than most researchers realize.

When a researcher submits work with a hallucinated citation, they are asserting that evidence exists that does not. In a clinical context, that could influence treatment decisions based on studies that never happened. In policy research, it could shape legislation built on phantom evidence. In education, it models to students that submitting unverified citations is acceptable academic practice.

The responsibility is unambiguous. You cannot outsource citation verification to any AI tool. You have to do it yourself. Every time.

Bias in Training Corpora: The Invisible Filter on Every Output

When a language model is trained on text, its outputs reflect the biases embedded in that text. If training data skews toward certain journals, institutions, and geographic regions, the model will subtly center those perspectives in every response it generates — without flagging that it is doing so.

What this means in practice:

A model asked to summarize “the literature” on a public health topic may draw almost entirely from US, UK, and European studies — even when equally rigorous work exists in Hindi, Swahili, Portuguese, or Mandarin.

A model assessing whether a methodology is “standard practice” may apply North American or European disciplinary norms without acknowledging that alternative methodological traditions exist and are well-established in their own contexts.

A model helping a researcher in Kenya frame a research question may push them toward Western academic conventions that serve neither their research context nor their community’s actual needs.

This is documented across fields — from medicine to economics to education research. It is not theoretical. It is the current state of these systems.

The Deskilling Risk Nobody Talks About Enough

There is a slower, less visible risk that does not generate headlines: what happens to research expertise when AI does more and more of the reading?

Deep reading — sitting with a complex paper, wrestling with its methodology, noticing what it claims and what it quietly assumes — is how researchers build genuine judgment. It is slow. It is sometimes uncomfortable. AI makes it possible to skip most of it.

The researchers who build the strongest expertise are still the ones who read deeply. Use AI to handle the volume. Read the things that matter most yourself. That distinction is not trivial — it is the difference between knowing how to research and knowing how to use a research tool.

How Researchers in Non-English-Speaking Countries Can Use AI to Close the Access Gap

This is one of the most important and consistently underdiscussed opportunities in the conversation about AI in academic research.

For decades, global scholarship has been structured around English. Journals, conferences, funding bodies, citation networks — all of it dominated by a handful of languages and a small number of geographic regions. Researchers who do not read fluent English, or who publish in other languages, have faced a systematic disadvantage that has nothing to do with the quality of their work and everything to do with the structure of the global knowledge system.

AI translation and multilingual models are beginning to change this. Not perfectly. But in ways that genuinely matter.

A researcher in Vietnam can now access a paper published in German and get a working understanding within minutes. A scientist in Brazil studying agricultural crop disease can engage with Chinese-language research at a speed that would have been unthinkable a decade ago. A PhD student in Egypt can engage with Japanese-language water management studies without waiting years for a commissioned translation to be published.

A practical multilingual research access workflow:

- Use a multilingual AI — Claude, GPT-4o, or Gemini — to translate the abstract and key results section first. Assess relevance before investing time in the full paper.

- If the paper is directly relevant, translate the full methodology and findings sections. Have a domain expert review the translation for terminology accuracy where possible.

- Use AI to clarify field-specific terminology that may not translate cleanly, noting explicitly where translation confidence is uncertain.

- When publishing your own work, consider using AI to create structured summaries in additional languages — widening your paper’s accessibility to other researchers facing the same barrier you just overcame.

The caveat matters: machine translation of technical academic content still introduces errors, especially around specialized terminology and culturally embedded concepts. Note when you are working from AI translation in your research process notes. For any paper that is central to your argument, find a domain expert who can verify the translation accuracy before you cite it.

This is not a perfect solution. But it is a genuinely transformative shift in who gets to access global scholarship — and AI-assisted research workflows are making it possible right now.

Where AI-Powered Research Is Actually Heading

RAG Architecture: The Most Important Development for Research Accuracy

Retrieval-Augmented Generation is not a technical buzzword. It is the architectural shift that most meaningfully addresses the hallucination problem in research contexts.

Standard language models generate responses based entirely on patterns encoded during training. They cannot access new information at query time. They cannot verify their own claims against current sources. RAG changes this by adding a retrieval layer: the model queries a document database at runtime, retrieves relevant content, and generates responses grounded in that retrieved material rather than training memory.

For academic research, this means you can configure AI systems to answer only from a curated corpus — your department’s papers, a specific journal’s archives, a regulatory database, a proprietary dataset. Hallucination rates drop substantially when the model is constrained to work from retrieved text. The responses are traceable. The sources are auditable.

Several institutional AI deployments in 2025-2026 are moving in exactly this direction. If you work within a research institution, ask whether a RAG-enabled document assistant is available to your team or in development.

Citation Network Analysis: Mapping How Ideas Actually Travel

One of the more sophisticated capabilities emerging in academic AI is citation network analysis — mapping how ideas flow through the literature over time, not just whether a paper exists.

This approach surfaces which papers influenced which, where paradigm shifts originated, and where a widely-cited finding has been quietly contradicted by subsequent research but never formally retracted. It makes the intellectual genealogy of a claim visible, not just its presence in a database.

arXiv’s preprint ecosystem has become one of the most important early-warning systems for emerging research directions, and AI-assisted citation mapping is increasingly being used to track how ideas move from preprint to peer-reviewed consensus. Scite already does a version of this with its supporting/contrasting citation framework. The next generation will go significantly further.

AI in Systematic Reviews: Compressing Time, Not Replacing Judgment

The most immediate impact of AI on systematic reviews is time compression in the screening phase. What once took months can now take days.

But AI cannot assess study quality, resolve ambiguous eligibility decisions, or handle methodological heterogeneity across study designs. These require expert human judgment. The Cochrane Collaboration’s developing AI guidelines will become the baseline for what is methodologically acceptable in AI-assisted reviews — follow them as they are released.

Autonomous Research Agents: Impressive in Bounded Domains

The trajectory is clearly toward greater autonomy. Research agents that can formulate queries, retrieve papers, synthesize findings, identify gaps, and draft reports with minimal human input at each step are in active development. Early versions exist in enterprise contexts.

They are impressive for bounded, well-defined tasks with clear success criteria. They are unreliable outside those bounds.

For the foreseeable future, the human researcher’s role in directing, evaluating, and taking full responsibility for research outputs is not optional. It is what makes the research valid.

Use AI as the engine. Stay in the driver’s seat. That is not a temporary compromise — it is the responsible position.

Frequently Asked Questions

Can AI replace human researchers?

No. And not in any timeframe that should concern working researchers today. The aspects of research that matter most — generating genuinely novel hypotheses, exercising ethical judgment, interpreting ambiguous findings in context, understanding cultural and political nuance, and taking full accountability for published claims — are not things AI can do reliably or be held responsible for. AI makes researchers significantly faster and better equipped. It does not make their judgment, expertise, or accountability redundant.

How accurate is AI for fact-checking in research?

It varies significantly by topic, tool, and how you use the tool. On well-documented topics with dense training data, accuracy can be reasonably high. On niche topics, recent developments, or specific quantitative claims, error rates are substantial — and the errors are often not obvious from the output. Treat AI as a navigation tool for fact-checking: use it to identify where to look, then verify in primary sources. Never use it as the final word on any factual claim.

Is using AI for academic research considered plagiarism?

This depends on how you are using it and what your institution’s current policy says. Using AI to organize your thinking, generate structural outlines, translate sources, or process background reading is generally not plagiarism. Submitting AI-generated text as your original intellectual contribution, or using AI-generated citations without verification, crosses a serious line. Most academic institutions now require explicit disclosure of AI tool use. Check your institution’s current policy — not last year’s version.

What are the best AI tools for academic research in 2026?

The honest answer: it depends entirely on what you are doing. For systematic literature reviews, Elicit is the most purpose-built option. For citation verification and understanding the evidentiary weight behind a claim, Scite.ai is currently unmatched. For source-linked, rapid fact-checking, Perplexity AI is the most practical option. For long document analysis with explicit uncertainty flagging, Claude handles very large texts and tends to flag the limits of its knowledge more explicitly. For brainstorming, outline generation, and drafting, ChatGPT remains effective for those tasks specifically.

How do you verify AI-generated citations without losing your mind?

Systematically, with a hard rule that applies to every citation without exception. Every citation goes into Google Scholar, PubMed, or Semantic Scholar as an exact title search before it appears in your draft. If the paper does not appear, remove it immediately. If it does appear, verify that the author list, year, and journal match exactly what the AI said. Then check the abstract to confirm the AI’s description of the findings actually reflects what the paper claims. Build this into your workflow as a mandatory step. It is the single most important habit in AI-assisted research.

Conclusion: How to Use AI for Research Without Letting It Use You

Let’s end where this should always end — with the researcher, not the tool.

Knowing how to use AI for research is now a legitimate and genuinely valuable professional skill. It is also one of the most misunderstood skills in practice, because the surface level looks deceptively simple. Type a question. Get an answer. Move on.

But you have seen how complicated the reality underneath actually is. The hallucinations that look exactly like facts. The citations that feel real and do not exist anywhere. The summaries that compress nuance until a carefully hedged finding becomes a confident, universal claim. The training biases that center certain perspectives and quietly marginalize entire bodies of scholarship. The institutional policies still being written in real time while researchers are already submitting AI-assisted work.

None of this means AI is not worth using in research. It absolutely is.

A researcher who builds a disciplined AI-assisted research workflow can engage with more literature, verify more claims, access more global scholarship, and produce more organized work in less time than one who does not. That advantage is real. It compounds over time. And it is worth developing carefully and deliberately.

But it requires staying in control. Verifying what the tool produces. Reading deeply where it matters. Maintaining the kind of rigorous intellectual honesty that is the actual point of research in the first place.

Start small. Pick one stage of your current research process. Apply one workflow from this guide. Notice where AI genuinely helps and where it introduces noise or risk. Build your judgment around real experience with the tools — not around enthusiasm for what they promise to do.

AI is a better shovel. You still have to know where to dig.

The researchers who will thrive in this decade are the ones who master AI without surrendering their judgment.